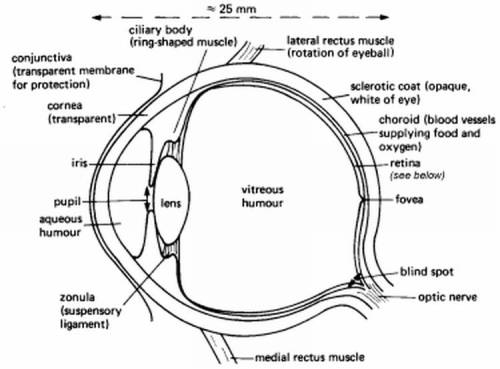

I have been following Dr Adrian Dyer’s work for about a year. I discovered him and his work while searching the Monash University website for research on vision, ML and related fields, when a friend of mine was thinking of applying for a PhD there. Dr Dyer is a vision scientist and a top expert on bees. His present research investigates aspects of insect vision and how insects make decisions in complex, colored natural environments.

His current findings (published just about a month or so back) could offer important cues for improving computer-based facial recognition systems.

[Thermographic image of a bumblebee on a flower, showing temperature difference. Image Source]

_____

When I read about Dr Dyer’s most recent work, I was instantly reminded of a rather similar experiment on pigeons done almost a decade and a half back by Watanabe et al [1].

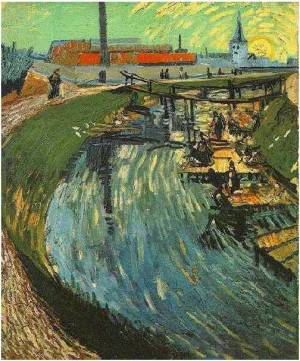

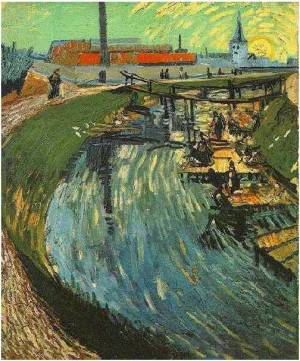

Digression: Van Gogh, Chagall and Pigeons

Allow me to take a brief digression and have a look at that classic experiment before returning to bees. The experiment by Watanabe et al is my favorite opener when I have to give a general talk on WHAT pattern recognition and generalization are.

In the experiment, pigeons were placed in a Skinner box and were trained to recognize paintings by two different artists (e.g. Van Gogh and Chagall). A pigeon was trained to recognize a particular artist by rewarding it for pecking when it was shown a painting by that artist.

_____

The pigeons were trained on a marked set of paintings by Van Gogh and Chagall. When tested on the SAME set, they discriminated between the two artists with 95% accuracy. Then they were presented with paintings by the same artists that they had not previously seen. Their ability to discriminate was still about 85%, which is a pretty high recognition rate. Interestingly, this performance wasn’t much off human performance.

What this means is that pigeons do not memorize pictures; they can extract patterns (the “style” of painting etc) and can generalize from already seen data to make predictions on data never seen before. Isn’t this really what Artificial Neural Networks and Support Vector Machines do? A lot of research goes into improving the generalization of these systems.
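In machine learning terms, the 95% and 85% figures correspond to accuracy on the training set and accuracy on held-out data. Here is a minimal sketch of that distinction. It uses made-up two-dimensional “style features” and a simple nearest-centroid rule; the class centers, the noise level and the classifier are all assumptions chosen for illustration and have nothing to do with the data or method in Watanabe’s experiment.

```python
import random

def make_paintings(center, n, rng):
    """Draw n synthetic 2-D 'style feature' points around a class center."""
    return [(rng.gauss(center[0], 1.0), rng.gauss(center[1], 1.0)) for _ in range(n)]

def centroid(points):
    """Mean of a list of 2-D points."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)

def predict(point, centroids):
    """Return the label whose centroid is closest to the point."""
    def sq_dist(c):
        return (point[0] - c[0]) ** 2 + (point[1] - c[1]) ** 2
    return min(centroids, key=lambda label: sq_dist(centroids[label]))

def accuracy(samples, centroids):
    """Fraction of (point, label) pairs that are classified correctly."""
    correct = sum(predict(p, centroids) == label for p, label in samples)
    return correct / len(samples)

rng = random.Random(0)  # fixed seed so the run is repeatable

# Hypothetical class centers in an imaginary feature space.
centers = {"van_gogh": (0.0, 0.0), "chagall": (3.0, 3.0)}

# "Seen" paintings used for training, and "unseen" ones drawn afterwards.
train = [(p, label) for label, c in centers.items() for p in make_paintings(c, 100, rng)]
test = [(p, label) for label, c in centers.items() for p in make_paintings(c, 100, rng)]

# Learning here is just averaging the seen examples of each artist.
centroids = {
    label: centroid([p for p, l in train if l == label]) for label in centers
}

train_acc = accuracy(train, centroids)
test_acc = accuracy(test, centroids)
print(f"accuracy on seen paintings:   {train_acc:.2f}")
print(f"accuracy on unseen paintings: {test_acc:.2f}")
```

The point of the sketch is only that the classifier keeps a summary of each class rather than the individual examples, so it still has something to say about points it never saw.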

_____

Coming Back to Bees:

Dr Adrian Dyer’s group has been working on visual information processing in miniature brains, brains that are much smaller than a primate brain. Honeybees provide a good model for working on such brains, one reason being that a lot is known about their behavior.

Their work has mostly been on color information processing in bees and how it could lend cues for improving vision in robotic systems. Investigations have shown that the very small bee brain can be taught to recognize extremely difficult, complex objects.

_____

Color Vision in Bees [2] :

Research by Dr Dyer’s group on color vision in bees has provided key insights into the relationship between the perceptive abilities of bees and the flowers that they frequent. As an example, it was known that flowers had evolved particular colors to suit the visual system of bees, but it was not known why certain flower colors are extremely rare in nature. The color discrimination abilities of the bees were tested by making a virtual meadow. There was high variability in the strategies used by bees to solve problems; however, the discriminative abilities of the bees were somewhat comparable to human sensory abilities. This research has shed light not only on how plants evolve flower colors to attract pollinators, but also on the nature of color vision systems in nature and their limitations.

_____

Recognition of Rotated Objects (Faces) [3] :

Recognizing 3-dimensional objects in natural environments is a challenging problem for visual systems, especially when the object is rotated, the lighting varies, or the object is partly occluded. Rotation can make an object look less like its own unrotated view than like a different, non-rotated object. Human and primate brains are known to recognize novel orientations of rotated objects by interpolating between stored views. Primate brains perform better on novel views that can be interpolated from stored views than on novel views that require extrapolation beyond them. Some animals, pigeons for example, perform equally well on interpolated and extrapolated views.

To test how miniature brains, such as those of bees, would deal with the problem posed by rotation of objects, Dr Dyer’s group presented bees with a face recognition task. The bees were trained on different views (0, 30 and 60 degrees) of two similar faces, S1 and S2. These views are enough to give an insight into how the brain handles a view with which it has no prior experience. The bees were trained with face images from a standard test used for studying vision in human infants.

[Image Source – 3]

Group 1 was trained on the 0 degree orientation and then given a non-rewarded test on the same view and on a novel 30 degree view.

Group 2 was trained on the 60 degree orientation and then given a non-rewarded test on the same view and on a novel 30 degree view.

Group 3 was trained on both the 0 degree and 60 degree orientations and then tested on the same views and on a novel 30 degree view, which requires interpolation.

Group 4 was trained on both the 0 degree and 30 degree orientations and then tested on the same views and on a novel 60 degree view, which requires extrapolation.

Bees in all four groups were able to recognize the trained target stimuli well above chance. When presented with novel views, however, Group 1 and Group 2 could not recognize them, while Group 3 could, by interpolating between the 0 degree and the 60 degree views. Group 4 could not recognize the novel view either, indicating that bees could not extrapolate from the 0 and 30 degree training to recognize faces oriented at 60 degrees.

The experiment suggests that a miniature brain can learn to recognize complex visual stimuli through experience, by a method of image interpolation and averaging. However, performance is poor when the stimuli require extrapolation.
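The pattern across the four groups can be summarized with a toy rule: a view is recognized if it was trained on, or if it lies between two trained views. The sketch below encodes only that rule. The function name `recognizes` and the strict “between” test are assumptions made for illustration; this is a restatement of the reported outcome, not the model used in the paper [3].

```python
def recognizes(trained_views, test_view):
    """Toy rule: succeed on a trained view, or on a view that lies
    strictly between the smallest and largest trained angles."""
    if test_view in trained_views:
        return True
    return min(trained_views) < test_view < max(trained_views)

# (trained angles in degrees, novel test angle in degrees) for each group
groups = {
    "Group 1": ([0], 30),
    "Group 2": ([60], 30),
    "Group 3": ([0, 60], 30),   # novel view needs interpolation
    "Group 4": ([0, 30], 60),   # novel view needs extrapolation
}

results = {name: recognizes(views, novel) for name, (views, novel) in groups.items()}

for name, ok in results.items():
    print(name, "recognizes the novel view" if ok else "fails on the novel view")
```

Under this rule only Group 3 succeeds on its novel view, which matches the outcome described above.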

_____

Bees performed poorly on images that were inverted, but in this regard they are in good company: even humans do not perform well when images are inverted. As an illustration, consider these two images:

How similar do these two inverted images look at first glance? Very similar? Let’s now have a look at the upright images. The one on the left below corresponds to the image on the left above.

[Image Source]

The upright images look very different, even though the inverted images looked very similar.

I would recommend rotating the image on the right to its upright position and back; please follow this link to do so. It is a good illustration of the fact that the human visual system does not perform AS well with inverted images.

_____

The findings show that despite its constrained neural resources (reportedly about 0.01 percent of a human’s), the bee brain can perform remarkably well at complex visual recognition tasks. Dr Dyer says this could lead to improved models in artificial systems, as this test is evidence that face recognition does not require an extremely advanced nervous system.

The conclusion is that the bee brain, which is small (about 850,000 neurons), could be simulated with relative ease, compared to what was previously thought necessary for complex pattern analysis and recognition, to perform rather complex pattern recognition tasks.
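As a quick check on scale, the neuron count can be turned into a percentage. The human figure used here, roughly 86 billion neurons, is a commonly cited estimate and is an assumption of this sketch; it does not come from Dr Dyer’s papers.

```python
BEE_NEURONS = 850_000             # figure quoted in this post
HUMAN_NEURONS = 86_000_000_000    # assumption: commonly cited estimate

# Bee neuron count as a percentage of the assumed human neuron count.
ratio_percent = BEE_NEURONS / HUMAN_NEURONS * 100
print(f"bee brain is about {ratio_percent:.4f}% of the human neuron count")
```

On that assumption the ratio comes out near 0.001 percent, so the percentage quoted above is best read as an order-of-magnitude figure.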

On a lighter note, Dr Dyer also says that his findings don’t justify the adage that you should not kill a bee otherwise its nest mates will remember you to take revenge. ;-)

_____

References and Recommended Papers:

[1] Van Gogh, Chagall and Pigeons: Picture Discrimination in Pigeons and Humans, in “Animal Cognition”, Springer Verlag – Shigeru Watanabe.

[2] The Evolution of Flower Signals to Attract Pollinators – Adrian Dyer.

[3] Insect Brains Use Image Interpolation Mechanisms to Recognise Rotated Objects, in “PLoS ONE” – Adrian Dyer, Quoc Vuong.

[4] Honeybees Can Recognize Complex Images of Natural Scenes for Use as Potential Landmarks – Dyer, Rosa, Reser.

_____
